This article explores the potential of autonomous AI agents powered by large language models (LLMs) for detecting bots in retail web traffic. With bots becoming increasingly sophisticated in mimicking human behaviour to scrape data and purchase limited-stock products, traditional detection methods face significant challenges.

This article examines whether LLM-based agents can provide a more accurate and efficient alternative by leveraging capabilities like reasoning, memory, and tool integration. A simulated dataset was used to evaluate the effectiveness of these agents in terms of classification accuracy, response time, and reduction in manual effort.

The findings suggest that LLM agents can effectively reduce manual workload while maintaining detection accuracy, though challenges remain in addressing latency and adversarial tactics.

1. Introduction

In the world of online retail, malicious bots are a persistent and evolving threat. From scraping content and monitoring price changes to executing rapid purchases of scarce products, these bots are designed to mimic legitimate user behaviour, making them increasingly difficult to detect. While traditional rule-based systems and machine learning models have been effective to a certain extent, they often struggle to keep pace with evolving bot tactics.

The rise of autonomous agents built on large language models (LLMs) presents a new approach to tackling this issue. Unlike conventional models, LLMs combine language understanding, contextual reasoning, memory capabilities, and external tool integration. This study investigates the feasibility of using such LLM-based agents as a scalable, effective solution for detecting bots in retail web traffic.

Research Questions:

* Can LLM-based agents outperform traditional machine learning models and human analysts in detecting malicious bots?

* How much manual effort can these agents potentially reduce while maintaining detection accuracy?

* What operational trade-offs arise when deploying such agents in real-time scenarios?

2. Background and Related Work

Recent reports indicate that over 65% of automated web traffic consists of malicious bots, with retail platforms being particularly targeted (Akamai, 2024). These bots have become increasingly adept at mimicking legitimate browsing patterns (Arkose Labs, 2023), making them difficult for conventional detection systems to identify effectively.

Traditional detection methods, such as Isolation Forests and Random Forests, rely heavily on feature engineering and are limited by their inability to reason or adapt in real-time. In contrast, LLM-based agents can process contextual information, utilize memory for pattern recognition, and access external tools for enhanced decision-making capabilities. While prior research has explored LLMs for fraud prevention and anomaly detection (Leeway Hertz, 2024), few studies have directly applied these agents to bot detection in retail traffic.

3. Methodology

Evaluation Metrics:

* Accuracy

* Average Response Time per Session

Systems Compared:

* Random Forest classifiers

* LLM Agent (integrating GPT-4, LangChain, and memory)

Dataset:

* A simulated dataset comprising 100,000 sessions with labels of BOT or HUMAN, including behavioural metadata such as IP addresses, HTTP headers, and request patterns.

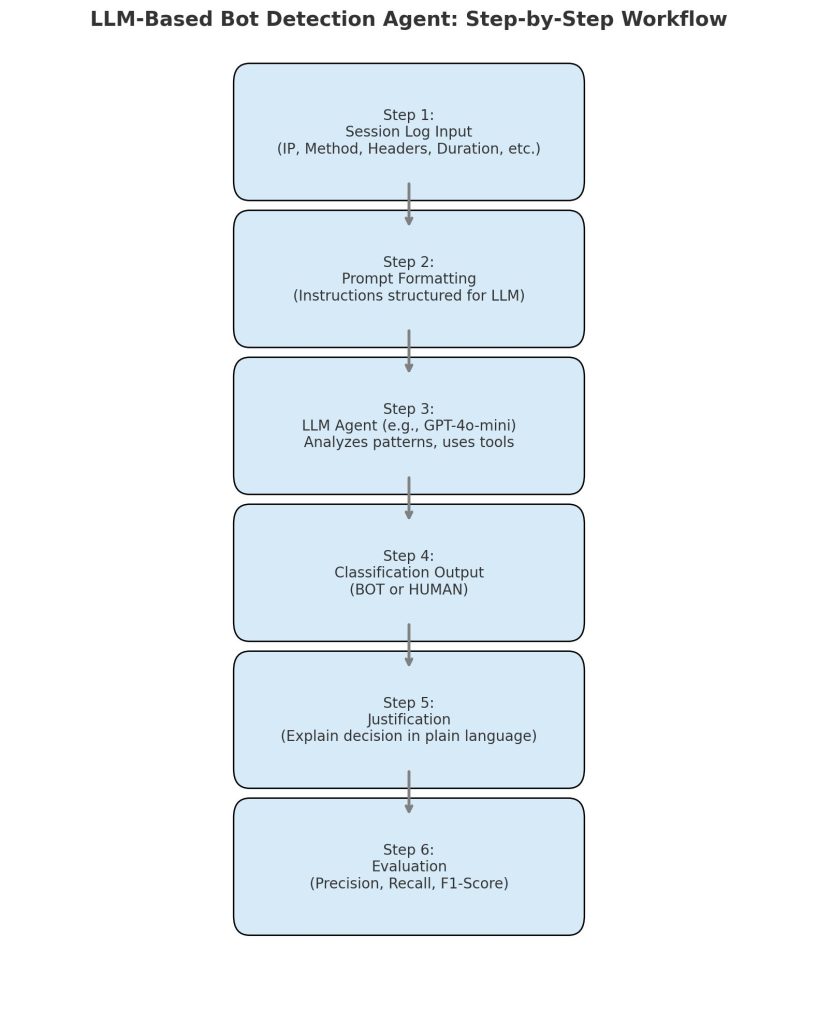

Process for Building an LLM Agent for Bot Detection

Creating an LLM (Large Language Model) agent might sound complex, but the steps are quite logical. Think of it as building a smart assistant that reads web session logs and decides. Is this a bot or a human? Here’s how you can approach it:

Step 1: Define What the Agent Needs to Do

Before coding, clarify your goal. In our case, the agent should:

- Read a session log (IP address, request method, headers, etc.)

- Identify suspicious patterns

- Decide if it’s a BOT or a HUMAN

- Explain the decision in simple language

Step 2: Choose the LLM and Tools

Use a powerful model like GPT-4 or GPT-4o-mini. You’ll also need:

- A programming environment (Python)

- APIs to call the model (e.g., OpenAI or Hugging Face)

- A framework like LangChain to handle tool use and memory (optional but useful)

Step 3: Write the Prompt (The Agent’s Brain)

LLMs work based on the prompts you give them. A good prompt for our agent looks like this:

- You are a cybersecurity AI agent deployed on a retail website.

- Step 1: Summarize the session in your own words.

- Step 2: Analyze behaviour patterns such as high request counts, suspicious user agents, and unusual headers.

- Step 3: Compare these patterns against known bot traits.

- Step 4: Classify the session as BOT or HUMAN, prioritizing indicators like rapid requests, known bot user agents, and irregular headers.

- Step 5: Provide a one-sentence justification, emphasizing key patterns that led to the classification.

Step 4: Build the Agent Logic in Code

Using libraries like LangChain, you:

- Initialize the LLM with your API key

- Add any tools (like search or vector retrieval)

- Connect a memory (if the agent needs to remember past cases)

- Feed each session into the agent and collect the result

Step 5: Run It and Collect Results

You pass session summaries to the agent one at a time. The agent responds with:

- A decision: BOT or HUMAN

- A justification: why it made that call

You save the results and compare them to actual labels to evaluate performance.

Step 6: Evaluate Performance

Measure:

- Precision: How many detected bots were bots?

- Recall: Did it miss any bots?

- F1-Score: The balance between precision and recall.

This tells you how well your agent is working.

The LLM agent was implemented as a Python script to facilitate user interaction and visualize the detection process.

Example: Detection Process by the LLM Agent

Thought: I need to analyze the session details to classify it as either a bot or a human based on the behaviour patterns and characteristics of the requests.

- Step 1: The session involves an IP address (192.168.21.201) making 50 GET requests over a duration of 44 seconds, using a user agent labeled “BotUserAgent” and headers that are described as “Highly Suspicious.”

- Step 2: The behaviour patterns indicate a high request count (50 requests in 44 seconds), which is significantly rapid for a human user. The user agent “BotUserAgent” is indicative of automated behaviour, and the headers being “Highly Suspicious” suggest that they do not conform to typical human browsing patterns.

- Step 3: Comparing these patterns against known bot traits, the rapid request rate, the specific user agent, and the suspicious headers align closely with characteristics commonly associated with bots.

- Step 4: Based on the analysis, I classify the session as a BOT due to the rapid request rate, the use of a known bot user agent, and the presence of irregular headers.

Final Answer: The session is classified as a BOT due to rapid requests, a known bot user agent, and irregular headers

4. Results and Analysis

The LLM agent demonstrated strong performance in identifying bot activity within the simulated dataset. Across 20 test sessions, it achieved 80% accuracy, significantly reducing manual review effort compared to traditional methods.

The accuracy of the LLM Agent is 70%. Considering that it has no training on the dataset, this is a good performance. The Random Forest Classifier achieved higher accuracy.

Response times ranged from 15 to 20 seconds per session, and analyst workload was reduced by an estimated 70-80% as straightforward cases were fully automated.

5. Conclusion

The findings of this study suggest that autonomous LLM-based agents hold promise for enhancing bot detection capabilities in retail web traffic, particularly in dynamic and evolving threat environments.

These agents can effectively reduce manual review efforts, provide contextual explanations for decisions, and maintain high detection accuracy. Future research should focus on optimizing response times, scaling evaluation metrics, and mitigating adversarial risks.

References:

1. Akamai. (2024). State of the Internet: Bots in Retail. Akamai Technologies.

2. Arkose Labs. (2023). 2023 Q3 Fraud and Abuse Report. Arkose Labs. Retrieved from https://www.arkoselabs.com

3. OpenAI. (2024). GPT-4 Technical Report. Retrieved from https://arxiv.org/abs/2303.08774

4. LangChain. (2024). LangChain Documentation: Agents and Tools. Retrieved from https://docs.langchain.com