Cloud security trends in 2024 showed how organizations managed risk under growing strain. As multicloud adoption, identity sprawl, and fragmented tooling made environments more complex, visibility declined and exposure across systems expanded. At the same time, the growing use of generative AI introduced new risks while increasing the speed, scale, and precision of existing attack paths. These changes explain how 2024 set the stage for the GenAI risk era.

Cloud Security in the Early Days of Generative AI

Cloud security in the early days of generative AI reflected accumulated complexity. Teams operated across distributed multicloud environments with inconsistent identity models, configurations, and policies. Identity inventories expanded to include users, service accounts, and machine workloads, often without full visibility.

Misconfigurations and excessive permissions remained common. Identity sprawl extended access beyond least privilege, while fragmented tools surfaced isolated alerts without connecting risk. Security teams could identify issues, but not always the attack paths they formed.

Generative AI entered this environment in an early, exploratory phase. Organizations tested limited use cases, while governance for LLM security remained incomplete. GenAI risk emerged within systems that already lacked unified control.

Multicloud Security as a Structural Risk

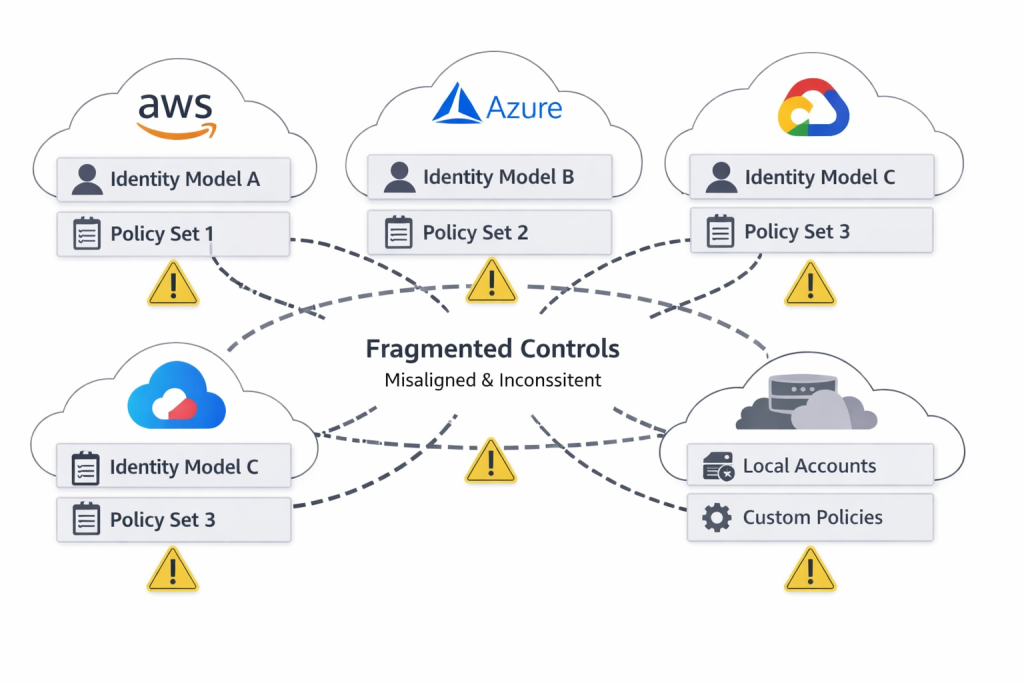

Multicloud security shifted from a resilience strategy to a source of structural risk. Organizations adopted multiple cloud providers to reduce dependency, but each provider introduced different access controls, identity frameworks, and configuration requirements. Security teams managed separate control planes that did not align with each other.

This fragmentation created operational friction. Teams validated policies in one environment but could not apply them consistently across others. Configuration drift increased as deployments evolved independently. Gaps between tools, teams, and environments reduced the ability to enforce consistent controls.
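Configuration drift of this kind can be illustrated with a small comparison against a shared baseline. The sketch below is a minimal example, not a description of any provider's tooling: the setting names, the `BASELINE` values, and the `provider_a` and `provider_b` environments are all hypothetical.

```python
# Hypothetical baseline: settings every environment is expected to satisfy.
BASELINE = {
    "storage_public_access": False,
    "encryption_at_rest": True,
    "mfa_required": True,
}


def find_drift(baseline, environments):
    """Return {environment: {setting: (expected, actual)}} for every mismatch."""
    drift = {}
    for env_name, settings in environments.items():
        mismatches = {
            key: (expected, settings.get(key))
            for key, expected in baseline.items()
            if settings.get(key) != expected
        }
        if mismatches:
            drift[env_name] = mismatches
    return drift


# Hypothetical per-provider settings; a missing key counts as drift.
environments = {
    "provider_a": {
        "storage_public_access": False,
        "encryption_at_rest": True,
        "mfa_required": True,
    },
    "provider_b": {
        "storage_public_access": True,
        "encryption_at_rest": True,
    },
}

drift_report = find_drift(BASELINE, environments)
for env_name, mismatches in drift_report.items():
    for key, (expected, actual) in mismatches.items():
        print(f"{env_name}: {key} expected {expected}, found {actual}")
```

In practice each provider expresses these settings differently, so a normalization step would have to come before a comparison like this one.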

These conditions expanded the attack surface. Unmonitored assets and inconsistent policies created opportunities for exploitation. Multicloud complexity became a security problem in itself and a defining factor among the top cloud security trends in 2024.

Identity Security as the Control Plane

Identity security became the central control plane for cloud environments. Access decisions depended on identity rather than network boundaries. Permissions assigned to users, services, or workloads dictated every interaction, whether with infrastructure, applications, or data.

Industry analysis highlighted widespread permission sprawl and attack path exposure across multicloud environments. Excessive permissions allowed attackers to move laterally without exploiting infrastructure vulnerabilities.

This model concentrated risk. Once an attacker compromised an identity, that access could extend across multiple systems. Identity determined how far an attacker could move and what data they could reach.

Security teams strengthened identity security by implementing least-privilege access, continuous entitlement review, and improved visibility into identity relationships. These measures addressed systemic risk more directly than isolated infrastructure controls.
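A continuous entitlement review can be approximated by comparing the permissions an identity holds with the permissions it has actually used. The following is a minimal sketch under that assumption; the identity names and permission strings are hypothetical, and a real review would draw usage from audit logs over a defined time window.

```python
def unused_permissions(granted, used):
    """Return, per identity, the permissions granted but never observed in use."""
    report = {}
    for identity, perms in granted.items():
        unused = perms - used.get(identity, set())
        if unused:
            report[identity] = sorted(unused)
    return report


# Hypothetical entitlements and observed usage.
granted = {
    "svc-reporting": {"storage:read", "storage:write", "iam:passrole"},
    "alice": {"storage:read"},
}
used = {
    "svc-reporting": {"storage:read"},
    "alice": {"storage:read"},
}

review = unused_permissions(granted, used)
for identity, perms in review.items():
    print(f"{identity}: candidates for removal -> {', '.join(perms)}")
```

Permissions that appear in the report are candidates for removal rather than automatic revocations, since rarely used access may still be legitimate.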

Fragmentation and the Rise of CNAPP

Security teams relied on multiple tools to manage cloud risk, each focused on a specific function such as posture management, workload protection, or vulnerability scanning. These tools lacked shared context, which limited visibility across environments.

This fragmentation made risk prioritization difficult. A low-severity misconfiguration could become critical when linked to a high-privilege identity, yet tools rarely surfaced these relationships.

CNAPP addressed this gap by unifying posture management, identity analysis, workload protection, and vulnerability management into a single model. This allowed teams to evaluate how risks intersect and to identify attack paths that expose sensitive data rather than reviewing isolated alerts.
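The effect of shared context on prioritization can be shown with a toy scoring function. This is an illustrative sketch only: the `Finding` fields, the 1 to 4 scales, and the scoring formula are assumptions made for the example, not how any CNAPP product ranks risk.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    resource: str
    severity: int             # 1 (low) to 4 (critical), as reported by a scanner
    identity_privilege: int   # 1 (read-only) to 4 (admin) for the linked identity
    touches_sensitive_data: bool


def contextual_score(finding):
    """Illustrative score: severity scaled by privilege, doubled for sensitive data."""
    score = finding.severity * finding.identity_privilege
    if finding.touches_sensitive_data:
        score *= 2
    return score


# Hypothetical findings: a high-severity issue with little reach, and a
# low-severity misconfiguration tied to an admin identity and sensitive data.
findings = [
    Finding("vm-build-agent", severity=3, identity_privilege=1, touches_sensitive_data=False),
    Finding("bucket-exports", severity=1, identity_privilege=4, touches_sensitive_data=True),
]

ranked = sorted(findings, key=contextual_score, reverse=True)
for finding in ranked:
    print(finding.resource, contextual_score(finding))
```

Ranked by severity alone, `vm-build-agent` would come first; with identity and data context included, the low-severity finding on `bucket-exports` moves to the top.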

The Shift Toward Prevention and Cloud Posture Management

The pace of cloud operations reduced the effectiveness of detection-focused strategies. Short deployment cycles meant misconfigurations could expose critical assets within narrow time windows. Detection models often missed these exposures, while alert volumes increased without improving prioritization.

Security leaders shifted toward prevention. Cloud posture management enforced policies before exposure and continuously validated configurations. Teams also analyzed attack paths to understand how identities, permissions, and vulnerabilities combined into exploitable conditions, shifting focus from isolated issues to systemic risk.
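Attack path analysis is often modeled as a graph search over resources and identities. The sketch below uses a breadth-first search over a small hypothetical graph, where an edge means "can access, assume, or exploit"; the node names and edges are invented for illustration.

```python
from collections import deque

# Hypothetical graph: an edge means "can access, assume, or exploit".
EDGES = {
    "internet": ["web-vm"],
    "web-vm": ["role-app"],        # exposed VM carries an attached role
    "role-app": ["role-admin"],    # excessive permission: app role can assume admin
    "role-admin": ["customer-db"],
    "role-readonly": ["logs-bucket"],
}


def shortest_attack_path(edges, source, target):
    """Breadth-first search; return the shortest path as a list, or None."""
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in edges.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None


path = shortest_attack_path(EDGES, "internet", "customer-db")
print(" -> ".join(path) if path else "no path")
```

Removing the single edge from `role-app` to `role-admin` breaks the path, which is the kind of preventive fix this analysis is meant to surface.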

Generative AI Security in an Early Phase

Generative AI security emerged as a new domain, but it remained in an early stage of adoption. Organizations limited deployments to experimental or tightly controlled use cases. Governance frameworks for LLM security had not reached maturity.

Security teams focused on practical questions. They assessed how sensitive data might enter prompts, how to validate model outputs, and what controls should govern interactions between LLMs and internal systems.

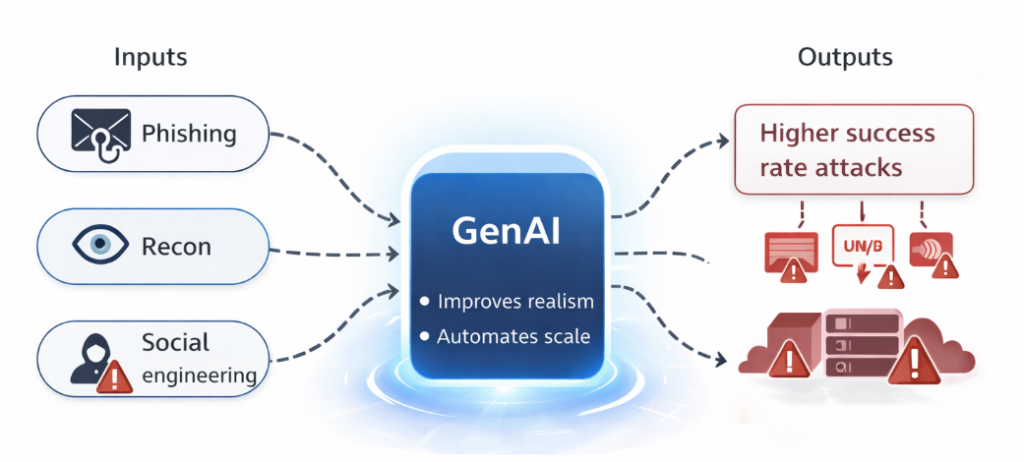

Security forecasts indicated that generative AI would increase the realism and scalability of phishing and social engineering. These developments improved attacker efficiency rather than introducing new attack categories. GenAI risk amplified existing weaknesses in identity and configuration.

Data Security and LLM-Specific Challenges

Generative AI introduced new considerations for data security. Large language models processed inputs that could include sensitive information, and outputs could expose unintended data.

Organizations lacked consistent policies for prompt data and output validation. Responsibility for data handling remained unclear across teams. Weak input validation increased the risk of unintended disclosure. Inconsistent governance enabled data to flow between cloud systems and AI applications without sufficient oversight.

LLM security frameworks emphasized data classification, access control, and monitoring. Adoption varied, and data security became a shared layer across cloud and AI systems.
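One practical control for prompt data is to redact recognizable sensitive values before a prompt leaves the organization. The sketch below is a minimal example under stated assumptions: the two regular expressions are illustrative and far from complete, and the sample prompt and key are made up.

```python
import re

# Illustrative patterns only; real data classification needs far broader coverage.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "AWS_ACCESS_KEY_ID": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact_prompt(prompt):
    """Replace matches with placeholders; return the clean prompt and labels found."""
    found = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            found.append(label)
    return prompt, found


# Made-up prompt containing an email address and a fake access key ID.
clean, labels = redact_prompt(
    "Summarize the ticket from jane.doe@example.com using key AKIAABCDEFGHIJKLMNOP"
)
print(clean)
print(labels)
```

Returning the labels alongside the redacted text lets the caller log what was removed, which supports the monitoring side of the same policy.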

Software Supply Chain Security in the Context of GenAI

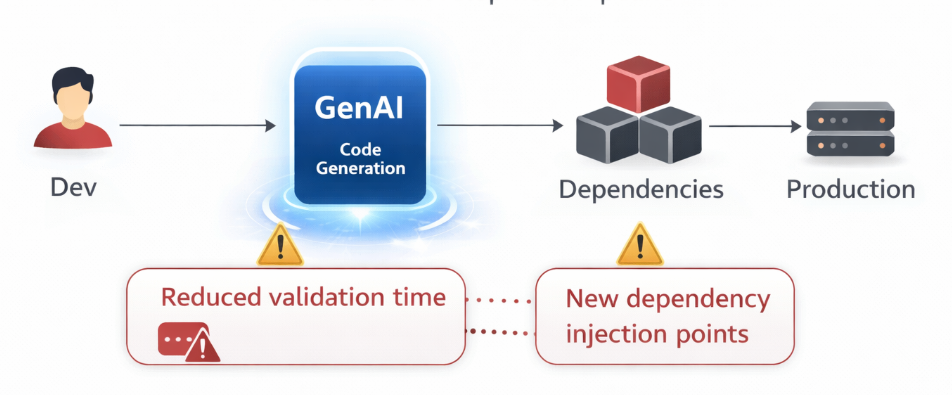

Software supply chain security continued to evolve alongside development practices. Code-generation tools increased development speed but introduced new dependencies into production environments.

This acceleration reduced the time available for validation and testing. Vulnerabilities could enter production systems if review processes did not keep pace.

Security teams extended existing controls to address assisted development workflows. This adjustment connected software supply chain security with generative AI adoption and reinforced the need for consistent validation.
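One way to extend validation to assisted development is to check every proposed dependency against a reviewed allowlist before it reaches a build. The following is a minimal sketch, assuming pinned `name==version` requirement lines; the `APPROVED` contents are illustrative and `fastjsonx` is a hypothetical package name.

```python
# Hypothetical allowlist: package name -> versions the security team has reviewed.
APPROVED = {
    "requests": {"2.31.0", "2.32.3"},
    "pyyaml": {"6.0.1"},
}


def review_dependencies(requirements, approved):
    """Split pinned 'name==version' lines into approved and rejected lists."""
    ok, rejected = [], []
    for line in requirements:
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blanks and comments
        name, sep, version = line.partition("==")
        if sep and version in approved.get(name.lower(), set()):
            ok.append(line)
        else:
            rejected.append(line)  # unpinned, unknown, or unreviewed version
    return ok, rejected


ok, rejected = review_dependencies(
    ["requests==2.31.0", "pyyaml", "fastjsonx==0.1.0"], APPROVED
)
print("approved:", ok)
print("needs review:", rejected)
```

Rejecting unpinned entries is a deliberate choice here: a dependency without a fixed version cannot be matched to a specific review.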

Convergence of Cloud and GenAI Risk

Cloud security trends in 2024 showed how identity, multicloud, and data security risks converged. These risks are no longer independent, and GenAI increased the speed at which they interact.

Because identity functions as the control plane, credential compromise enables access across systems. Multicloud environments introduce inconsistencies that expand the attack surface. Data security connects both layers, since cloud platforms and AI applications rely on shared sensitive data.

This convergence creates compound risk. A single compromised identity can expose data across multiple environments and enable further exploitation at greater speed. GenAI risk increases the efficiency of reconnaissance, phishing, and attack path analysis.

Strategic Implications for Security Leaders

Security leaders adjusted priorities to address these conditions. They strengthened identity security through least-privilege access and continuous entitlement management. They adopted CNAPP to unify visibility and analyze interconnected risks. They expanded cloud posture management to prevent misconfigurations before exposure occurred.

They also integrated generative AI security into existing governance structures, aligning controls for LLM security and data use with broader security policies. This alignment reduced fragmentation between cloud and AI risk management.

These changes reflect a move toward systemic control. Identity controls limit lateral movement. Cloud posture management reduces exposure windows. Integrated platforms improve prioritization and response.

From Fragmentation to Systemic Control

Cloud security in 2024 highlighted the transition from fragmented practices to coordinated risk management. Multicloud complexity, identity sprawl, fragmented tooling, and software supply chain security challenges had already strained the security environment. When GenAI arrived, it accelerated the exploitation of those weaknesses.

This progression explains how 2024 set the stage for the GenAI risk era. The shift resulted from the interaction between established weaknesses and emerging capabilities.

Organizations that align identity security, cloud posture management, and data security establish stronger control over systemic risk. Organizations that maintain fragmented or reactive approaches face increasing exposure. Cloud security now depends on understanding how risks connect across systems rather than evaluating them in isolation.

References:

- World Economic Forum. (2024, January). Global cybersecurity outlook 2024. World Economic Forum. https://www3.weforum.org/docs/WEF_Global_Cybersecurity_Outlook_2024.pdf

- Google Cloud. (2024, January). Cybersecurity forecast 2024. Google. https://services.google.com/fh/files/misc/google-cloud-cybersecurity-forecast-2024.pdf

- King, T. (2023, December). Artificial intelligence predictions from experts for 2024. Solutions Review. https://solutionsreview.com/artificial-intelligence-predictions-from-experts-for-2024/

- Microsoft. (2024). State of multicloud security risk report. Microsoft. https://info.microsoft.com/ww-landing-state-of-multicloud-security-report.html

- National Institute of Standards and Technology. (2023, January). Artificial intelligence risk management framework (AI RMF 1.0). NIST. https://nvlpubs.nist.gov/nistpubs/ai/nist.ai.100-1.pdf

- Weigand, S. (2024, January). 2024 tech predictions: Defenders, adversaries will fine-tune artificial intelligence to their advantage. SC Media. https://www.scworld.com/news/2024-tech-predictions-defenders-adversaries-will-fine-tune-artificial-intelligence-to-their-advantage

(Photo by Max Ilienerwise on Unsplash)