As Large Language Models (LLMs) and generative AI integrate into the software development lifecycle, the focus of quality assurance is shifting from traditional script maintenance to the integrity of release decisions.

While AI-driven tooling accelerates test creation, it introduces a “reliability gap”: automation that runs quickly but cannot always be trusted as a definitive release signal. To address this, engineering teams are adopting a stability-first approach that treats test automation as a rigorous reliability system rather than a collection of static scripts.

The Evolution of the Testing Oracle: From Static Assertions to AI-Perception

A fundamental challenge in automation is the “oracle problem” – the difficulty of programmatically determining if a test has truly passed or failed, especially in complex UIs. Historically, oracles relied on brittle, hard-coded assertions. In 2023, the industry is seeing a transition toward intelligent oracles that use machine learning to learn expected behavior patterns and flag only significant anomalies.

Modern visual AI tools now function as perceptual validators. Rather than performing pixel-by-pixel comparisons, which often trigger false positives due to minor CSS shifts or font anti-aliasing, these systems use computer vision to detect structural regressions like missing components or broken layouts. This capability allows teams to maintain speed even when UI requirements are fluid or adaptive.
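The difference between a strict pixel oracle and a tolerant one can be illustrated with a toy example. The sketch below is a deliberately simplified stand-in, not how commercial visual AI tools work: it compares the average brightness of small blocks rather than running a computer vision model. The function names, the block size, and the tolerance value are all assumptions made for illustration.

```python
def pixel_equal(a, b):
    """Strict oracle: any differing pixel counts as a failure."""
    return a == b


def block_means(img, size):
    """Mean brightness of each size x size block (dimensions assumed divisible by size)."""
    rows, cols = len(img), len(img[0])
    return [
        [
            sum(img[r + dr][c + dc] for dr in range(size) for dc in range(size))
            / (size * size)
            for c in range(0, cols, size)
        ]
        for r in range(0, rows, size)
    ]


def tolerant_equal(a, b, size=2, tolerance=10.0):
    """Lenient oracle: pass if every block mean differs by at most `tolerance`."""
    means_a, means_b = block_means(a, size), block_means(b, size)
    return all(
        abs(x - y) <= tolerance
        for row_a, row_b in zip(means_a, means_b)
        for x, y in zip(row_a, row_b)
    )


# Hypothetical 4x4 grayscale screenshots; the dark top-left block is a "component".
baseline = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [255, 255, 255, 255],
    [255, 255, 255, 255],
]
# One pixel shifted slightly, as font anti-aliasing might do.
antialiased = [row[:] for row in baseline]
antialiased[0][1] = 12
# The component is gone entirely.
missing = [[255] * 4 for _ in range(4)]
```

In this toy setup the strict oracle rejects the anti-aliased screenshot, while the tolerant oracle accepts it and still rejects the screenshot with the missing component.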

| Oracle Category | Traditional Mechanism | AI-Enhanced Mechanism | Impact on QA Lifecycle |
| --- | --- | --- | --- |
| Functional Validation | Hard-coded expect() assertions. | LLM analysis of requirements vs. output. | Accelerates test case synthesis from user stories. |
| Visual Validation | Pixel-by-pixel comparison. | Deep learning-based perceptual validators. | Reduces false positives in visual regression. |
| Root Cause Analysis | Manual log inspection. | Automated log clustering and error pattern recognition. | Accelerates triage and reduces mean time to repair (MTTR). |

Deterministic Gates vs. Probabilistic Signals

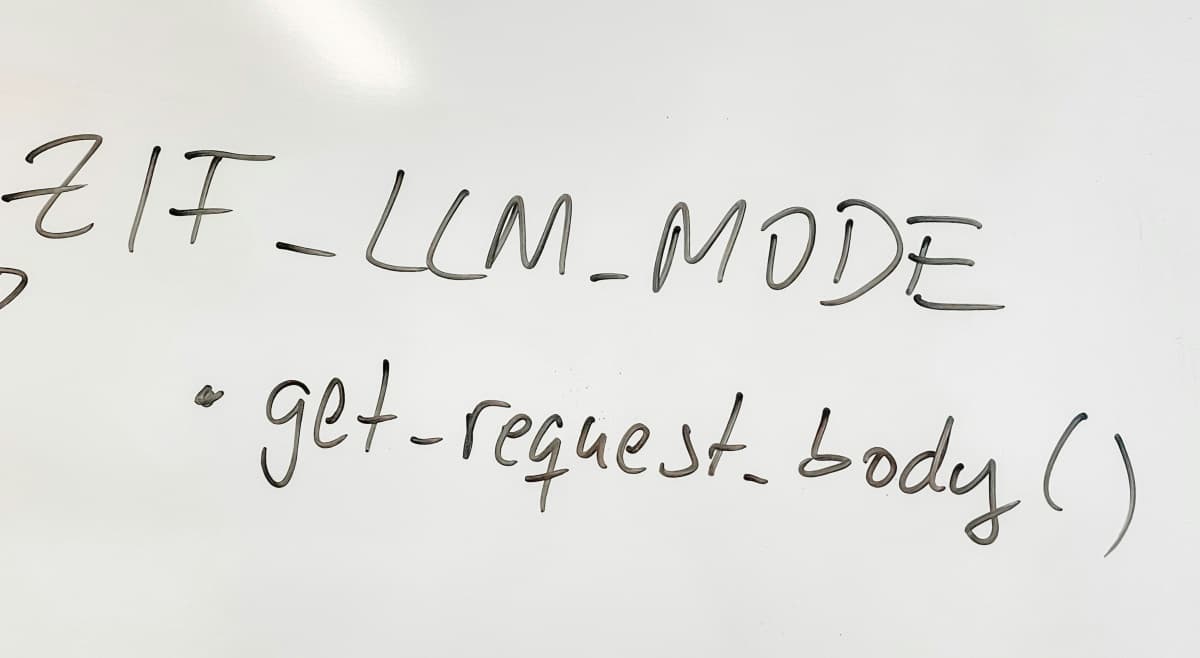

A core architectural principle for trust involves separating deterministic execution from probabilistic AI signals. In modern CI/CD pipelines, “deterministic gates” are the non-negotiable checks that block or allow a release.

- Deterministic Quality (Release Gates): This layer consists of unit, integration, and API contract tests that behave like precise measurement tools. They must be repeatable and idempotent – ensuring that parallel runs or environment jitter do not compromise the result.

- Probabilistic Quality (Signal Generators): This is where LLMs excel. AI can draft test cases from specifications, suggest candidate locators, or summarize complex failure logs. However, these are treated as “suggestions” that require human or deterministic validation before they can block a release.
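One way to picture the separation described in the two layers above is a release decision function in which only deterministic checks can block. This is a minimal sketch under assumed names (`Kind`, `CheckResult`, `release_decision` are hypothetical and not taken from any CI product): probabilistic results are surfaced as advisories and never change the outcome.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    DETERMINISTIC = "deterministic"  # unit, integration, API contract tests
    PROBABILISTIC = "probabilistic"  # LLM-drafted tests, locator suggestions, log summaries


@dataclass(frozen=True)
class CheckResult:
    name: str
    kind: Kind
    passed: bool


def release_decision(results):
    """Return (allowed, blockers, advisories).

    Only failed deterministic checks block the release. Failed probabilistic
    checks are reported for human review but do not affect `allowed`.
    """
    blockers = [
        r.name for r in results if r.kind is Kind.DETERMINISTIC and not r.passed
    ]
    advisories = [
        r.name for r in results if r.kind is Kind.PROBABILISTIC and not r.passed
    ]
    return (not blockers, blockers, advisories)


# Hypothetical pipeline run.
results = [
    CheckResult("api-contract", Kind.DETERMINISTIC, True),
    CheckResult("unit", Kind.DETERMINISTIC, True),
    CheckResult("llm-drafted-checkout-test", Kind.PROBABILISTIC, False),
]
allowed, blockers, advisories = release_decision(results)
```

Here the release is allowed even though the AI-drafted test failed; that failure lands in `advisories` until a human or a deterministic check confirms it.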

SRE Principles for Automation: The Flakiness Budget

As test suites grow, they begin to exhibit the complexity of production systems. Engineering teams are increasingly applying Site Reliability Engineering (SRE) principles – specifically Service Level Objectives (SLOs) and Error Budgets – to manage automation reliability.

A “Flakiness Budget” is a policy-driven approach to non-deterministic behavior. If a test suite exceeds a predefined failure rate (e.g., more than 0.5% flaky failures per week), the team treats the breach as a production incident. This shifts the culture from “rerun until it passes” to root-cause prevention.

The standard formula for an error budget is: Error Budget = (100% - SLO%) × Total Events in Period
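As a worked example of the formula, the sketch below applies it to test executions. The numbers are hypothetical: a 99.5% stability SLO (matching the 0.5% example above) and 20,000 test executions in a week. The function names are illustrative assumptions.

```python
def error_budget(slo_percent, total_events):
    """Allowed bad events: (100% - SLO%) x total events in the period."""
    return (100.0 - slo_percent) / 100.0 * total_events


def budget_remaining(slo_percent, total_events, flaky_failures):
    """Positive means budget is left; negative means the budget is breached."""
    return error_budget(slo_percent, total_events) - flaky_failures


# Hypothetical week: 20,000 test executions against a 99.5% SLO.
weekly_budget = error_budget(99.5, 20_000)  # roughly 100 flaky failures allowed

# 130 observed flaky failures would exceed that allowance.
breached = budget_remaining(99.5, 20_000, 130) < 0
```

Under these assumptions, the 130 flaky failures breach the budget, which is the point at which the policy above would treat flakiness as an incident rather than rerunning until green.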

Advanced Triage: Bayesian Flakiness Score (BFS)

To handle flakiness at the triage stage, researchers have proposed models like the Bayesian Flakiness Score (BFS). This approach uses Bernoulli outcome histories to calculate a probabilistic score for each failing test.

A high BFS indicates a high likelihood of flakiness based on historical “pass/fail” streaks and environment signals (such as CPU pressure or network latency), while a low score suggests a genuine fault. This allows pipelines to automatically prioritize certain tests for re-runs while routing likely regressions directly to engineers for immediate investigation.
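The routing idea can be sketched with a simple Beta-Bernoulli model. This is not the published BFS formulation, which is not specified here; it is an assumed simplification in which each past failure of a test is a Bernoulli trial that records whether the failure disappeared on rerun without a code change. Environment signals such as CPU pressure are left out, and the prior and threshold values are illustrative.

```python
def flakiness_score(rerun_outcomes, prior_alpha=1.0, prior_beta=1.0):
    """Posterior mean of P(failure is flaky) under a Beta(prior_alpha, prior_beta) prior.

    rerun_outcomes: iterable of bools, one per past failure. True means the
    failure passed on rerun with no code change (evidence of flakiness).
    """
    outcomes = list(rerun_outcomes)
    flaky = sum(outcomes)
    return (prior_alpha + flaky) / (prior_alpha + prior_beta + len(outcomes))


def route_failure(score, rerun_threshold=0.7):
    """High scores are retried automatically; low scores go to an engineer."""
    return "rerun" if score >= rerun_threshold else "investigate"


# Hypothetical histories for two failing tests.
often_flaky = [True] * 8 + [False] * 2   # 8 of 10 past failures vanished on rerun
never_flaky = [False] * 10               # every past failure was reproducible

flaky_route = route_failure(flakiness_score(often_flaky))
stable_route = route_failure(flakiness_score(never_flaky))
```

With a uniform prior, a test with no history scores 0.5, so the default threshold sends unknown tests to an engineer rather than silently retrying them.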

Selector Stability and the Locator Hierarchy

The durability of AI-assisted tests depends on the choice of element locators. AI agents that generate scripts based on brittle CSS or deep XPath chains create a maintenance burden. Stability-first frameworks enforce a hierarchy that prioritizes semantic and user-facing attributes over implementation details.

- Role Selectors & ARIA Labels: Selecting by functional roles (e.g., button, checkbox) ensures the test validates the application as a user (or screen reader) perceives it.

- data-testid Attributes: Custom attributes explicitly defined for testing decouple automation from styling. While some libraries treat these as a last resort, they remain a stable “escape hatch” for complex components where roles are ambiguous.

- Brittle Selectors (Avoid): Avoid deeply nested CSS selectors and auto-generated IDs that change with every build, so that tests survive UI refactors.
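The hierarchy above can be enforced mechanically when an AI agent proposes several candidate locators for the same element. The sketch below is framework-agnostic and entirely hypothetical: the strategy names, the ranking table, and the brittleness heuristics are assumptions for illustration, not the behavior of any particular testing library.

```python
# Lower rank = preferred. The ordering mirrors the hierarchy described above.
STRATEGY_RANK = {"role": 0, "label": 1, "testid": 2, "css": 3, "xpath": 4}


def is_brittle(strategy, value):
    """Rough heuristics for selectors coupled to implementation details."""
    if strategy == "xpath":
        return value.count("/") > 3          # deep XPath chain
    if strategy == "css":
        return value.count(">") >= 2 or ":nth-child" in value
    return False


def pick_locator(candidates):
    """Pick the highest-ranked non-brittle candidate.

    candidates: list of (strategy, value) tuples.
    Returns the chosen tuple, or None if every candidate is rejected.
    """
    usable = [
        c for c in candidates if c[0] in STRATEGY_RANK and not is_brittle(*c)
    ]
    return min(usable, key=lambda c: STRATEGY_RANK[c[0]], default=None)


# Hypothetical suggestions from an AI agent for a checkout button.
suggested = [
    ("css", "div > ul > li:nth-child(3) > a"),
    ("testid", "checkout-submit"),
    ("role", "button[name='Submit']"),
]
chosen = pick_locator(suggested)
```

Returning None when only brittle candidates exist forces the pipeline to ask for a better locator instead of quietly accepting a fragile one.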

Security and Governance: The OWASP Top 10 for LLMs

The integration of AI into the QA pipeline introduces unique security risks that must be addressed at the architecture level. The 2023 edition of the OWASP Top 10 for LLM Applications identified critical vulnerabilities such as Prompt Injection (LLM01) and Insecure Output Handling (LLM02).

In a QA context, “Insecure Output Handling” is particularly relevant: a team that accepts AI-generated test code without scrutiny risks executing malicious scripts within its internal build environment. Mitigation strategies include implementing human-in-the-loop validation for all AI-generated artifacts and enforcing strict role-based access controls for LLM-directed processes.
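Human review can be supported by an automated pre-filter that highlights risky constructs in generated test code before anyone runs it. The sketch below uses Python's standard `ast` module to flag a small, illustrative set of imports and calls. The lists are assumptions and far from exhaustive, and a static scan like this is a triage aid for reviewers, not a security boundary or a replacement for the review itself.

```python
import ast

# Illustrative, non-exhaustive lists; a real policy would be broader.
RISKY_MODULES = {"os", "subprocess", "socket", "shutil"}
RISKY_CALLS = {"eval", "exec", "compile", "__import__"}


def review_flags(source):
    """Return a sorted list of reasons a reviewer should look closely at `source`."""
    try:
        tree = ast.parse(source)
    except SyntaxError:
        return ["does not parse"]
    flags = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                root = alias.name.split(".")[0]
                if root in RISKY_MODULES:
                    flags.add(f"imports {root}")
        elif isinstance(node, ast.ImportFrom):
            root = (node.module or "").split(".")[0]
            if root in RISKY_MODULES:
                flags.add(f"imports {root}")
        elif isinstance(node, ast.Call) and isinstance(node.func, ast.Name):
            if node.func.id in RISKY_CALLS:
                flags.add(f"calls {node.func.id}")
    return sorted(flags)


# Hypothetical AI-generated snippets.
benign = "def test_total():\n    assert 2 + 2 == 4\n"
suspicious = "import subprocess\n\ndef test_x():\n    eval('1')\n"

benign_flags = review_flags(benign)
suspicious_flags = review_flags(suspicious)
```

An empty flag list does not mean the code is safe; it only means this particular scan found nothing, so the artifact still goes through the normal human-in-the-loop review.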

Conclusion: Confidence per Minute

The objective of LLM-assisted automation is not simply to generate more code, but to increase “confidence per minute”. By embedding machine learning within a framework of SRE-driven budgets and deterministic release gates, organizations can leverage the speed of AI without sacrificing the integrity of their release decisions.

In this model, the human tester’s role evolves from a script writer to a supervisor of intelligent systems – focusing on high-level risk analysis while the AI handles the repetitive triage of a high-velocity pipeline.
