AI features embedded within web applications often exhibit contextual discontinuity. They repeat questions already resolved, misinterpret the active view, and disregard selected records or applied filters. The underlying model may be capable, yet the surrounding integration introduces inconsistency that manifests as unpredictable behavior.

In most cases, the model is not the real problem. The friction shows up at the boundary between your application state and the model input.

Modern web applications already hold rich runtime state. Navigation context, selected entity IDs, draft inputs, filters, and recent actions are all sitting in memory. But many AI integrations flatten that structured state into one long prompt and expect the model to figure everything out from there.

This architectural gap becomes more visible as organizations embed AI deeper into their products. McKinsey’s analysis of generative AI disruption in software suggests that product value depends on integration discipline rather than feature novelty. When AI has no clear view of the application state, its behavior starts to drift.

The solution is architectural. AI should be treated as a first-class runtime consumer of structured application state, not as an external chatbot awkwardly layered on top of the interface.

Reframing AI as a First-Class Runtime Consumer of Application State

Before we talk about patterns, it helps to look closely at where context actually disappears. Once you see that boundary clearly, it becomes much easier to decide how runtime state should flow to the AI.

Where Context Disappears: The Prompt Boundary

Applications already model user interaction in a structured form. Reference data models for user interaction logs describe the relationships among user, action, target object, and UI hierarchy. Activity name, timestamp, input value, and object position become typed attributes of those entities rather than loose text fragments.
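To make the contrast concrete, here is a minimal sketch of one interaction as a typed record next to a flattened prose version of it. The interface names, field names, and the `flattenToProse` helper are illustrative assumptions, not part of any cited model or library.

```typescript
// Hypothetical shapes; the fields loosely follow the entities named above.
interface UiTargetRef {
  objectId: string;
  hierarchy: string[]; // path from the root view down to the element
  position?: { row: number; column: number };
}

interface InteractionRecord {
  userId: string;
  activityName: "select" | "filter" | "navigate" | "edit";
  timestamp: number; // epoch milliseconds
  target: UiTargetRef;
  inputValue?: string;
}

const sampleRecord: InteractionRecord = {
  userId: "u-17",
  activityName: "select",
  timestamp: 1700000000000,
  target: {
    objectId: "invoice-42",
    hierarchy: ["workspace", "invoices", "table", "row"],
    position: { row: 3, column: 0 },
  },
};

// A lossy flattening of the kind a prompt template might produce.
function flattenToProse(record: InteractionRecord): string {
  return `The user performed a ${record.activityName} on an item in ${record.target.hierarchy[1]}.`;
}

const proseVersion = flattenToProse(sampleRecord);
```

The typed record still knows which invoice was selected. The prose version only knows that something in the invoices view was touched.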

That modeling discipline matters because the moment structured interaction turns into a block of prompt text, the system loses precision. The UI continues to render from explicit state transitions. The model, on the other hand, has to interpret a description of that state. That mismatch introduces ambiguity at the integration layer.

AI as Another Subscriber to Structured State

AI should behave like any other runtime consumer. Rendering engines, analytics systems, and logging pipelines subscribe directly to structured state. They do not try to reconstruct it from descriptive text.

Research on web interaction log analysis shows that interaction signals can be captured, segmented, and meaningfully replayed. Navigation transitions, selection changes, and dispatch events form deterministic signals inside the runtime.

If the UI depends on structured state for predictability, AI should consume that same structured representation.
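A rough sketch of that idea, assuming a small hand-rolled store rather than any particular state library: the renderer and the AI context are both plain subscribers to the same state. `createViewStore`, `ViewState`, and the field names are made up for illustration.

```typescript
interface ViewState {
  activeWorkspaceId: string | null;
  selectedRecordIds: string[];
}
type ViewStateListener = (state: ViewState) => void;

function createViewStore(initial: ViewState) {
  let state = initial;
  let listeners: ViewStateListener[] = [];
  return {
    getState: (): ViewState => state,
    subscribe(listener: ViewStateListener): () => void {
      listeners.push(listener);
      // Returns an unsubscribe function.
      return () => {
        listeners = listeners.filter((l) => l !== listener);
      };
    },
    update(patch: Partial<ViewState>): void {
      state = { ...state, ...patch };
      listeners.forEach((listener) => listener(state));
    },
  };
}

const appStore = createViewStore({ activeWorkspaceId: null, selectedRecordIds: [] });

// Existing consumer: a stand-in for the renderer.
const rendered: string[] = [];
appStore.subscribe((s) => {
  rendered.push(`workspace=${s.activeWorkspaceId}`);
});

// New consumer: the AI context reads the same structured state.
let aiContext: ViewState = appStore.getState();
appStore.subscribe((s) => {
  aiContext = s;
});

appStore.update({ activeWorkspaceId: "ws-1" });
appStore.update({ selectedRecordIds: ["r-1", "r-2"] });
```

Nothing here describes the screen in words. The AI side holds the same object the renderer just consumed.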

Deterministic Context Flows

AI behaves more consistently when its input comes from explicit transitions, versioned snapshots, and clearly bounded domains. Prompt tuning often tries to compensate for structural gaps. A well-designed runtime removes that need.

When invocation is driven by state transitions, the model responds to real context instead of a rough narrative description.
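One way to sketch transition-driven invocation is a trigger that only calls the model when the context version advances. The `InvoiceContext` shape and `createTransitionTrigger` are hypothetical names, and the model call is replaced with a callback.

```typescript
interface InvoiceContext {
  version: number;
  activeInvoiceId: string | null;
  selectedCount: number;
}

// Wraps a model call so it fires once per new context version.
function createTransitionTrigger(invokeModel: (ctx: InvoiceContext) => void) {
  let lastInvokedVersion = -1;
  return (ctx: InvoiceContext): boolean => {
    if (ctx.version <= lastInvokedVersion) return false; // no new transition
    lastInvokedVersion = ctx.version;
    invokeModel(ctx);
    return true;
  };
}

const invokedVersions: number[] = [];
const onContextChanged = createTransitionTrigger((ctx) => {
  invokedVersions.push(ctx.version);
});

onContextChanged({ version: 1, activeInvoiceId: "inv-42", selectedCount: 1 });
onContextChanged({ version: 1, activeInvoiceId: "inv-42", selectedCount: 1 }); // re-render, same version
onContextChanged({ version: 2, activeInvoiceId: "inv-42", selectedCount: 3 });
```

The repeated call with version 1 is ignored, so a re-render alone never reaches the model.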

The Interaction Context Layer Pattern

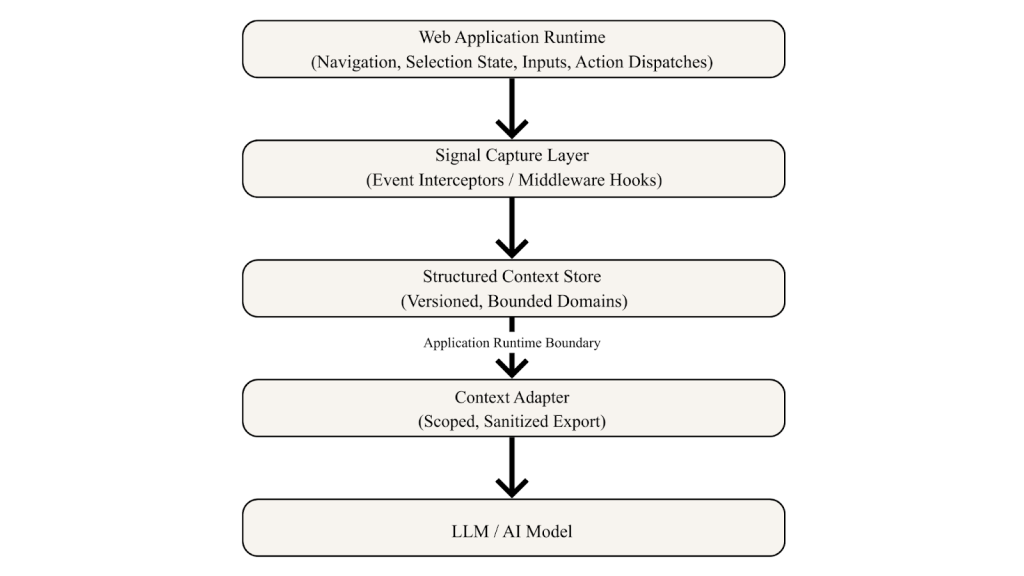

If you want this to work cleanly, you need a dedicated abstraction. The Interaction Context Layer (ICL) formalizes how runtime state flows toward the model.

The ICL doesn’t add new business logic. What it adds is separation. Signal capture, context storage, and model communication become distinct responsibilities.

This mirrors principles found in context-aware application architecture, where context lifecycle management and scoped access strengthen system reliability.

The ICL performs five core functions:

- Maintain a structured, versioned context store.

- Decouple runtime state from specific AI provider APIs.

- Normalize raw events into schema-driven context fields.

- Enforce scoped and sanitized export before model invocation.

- Capture meaningful interaction signals without modifying feature logic.

By isolating these responsibilities, teams avoid tight coupling between frontend components and model requests.
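The separation can be sketched as three small interfaces with one wiring function. All names here (`SignalSource`, `ContextStore`, `ModelAdapter`, `connect`) are assumptions chosen for illustration, and the in-memory store is deliberately naive.

```typescript
type FieldValue = string | number | boolean | string[] | null;

interface ContextSnapshot {
  version: number;
  fields: Record<string, FieldValue>;
}

// 1. Signal capture: reports normalized field updates, knows nothing about models.
interface SignalSource {
  onSignal(handler: (field: string, value: FieldValue) => void): void;
}

// 2. Context storage: versioned, knows nothing about the UI or the provider.
interface ContextStore {
  apply(field: string, value: FieldValue): void;
  snapshot(): ContextSnapshot;
}

// 3. Model communication: the only part that knows a provider exists.
interface ModelAdapter {
  toPayload(snapshot: ContextSnapshot): string;
}

function createInMemoryStore(): ContextStore {
  let version = 0;
  let fields: Record<string, FieldValue> = {};
  return {
    apply(field, value) {
      fields = { ...fields, [field]: value };
      version += 1;
    },
    snapshot: () => ({ version, fields }),
  };
}

function connect(source: SignalSource, store: ContextStore): void {
  source.onSignal((field, value) => store.apply(field, value));
}

// Demo wiring with a fake signal source and a JSON adapter.
const handlers: Array<(field: string, value: FieldValue) => void> = [];
const demoSource: SignalSource = {
  onSignal: (handler) => {
    handlers.push(handler);
  },
};
const demoStore = createInMemoryStore();
const jsonAdapter: ModelAdapter = { toPayload: (s) => JSON.stringify(s) };

connect(demoSource, demoStore);
handlers.forEach((h) => h("activeWorkspaceId", "ws-1"));
const demoPayload = jsonAdapter.toPayload(demoStore.snapshot());
```

Swapping providers would mean replacing `jsonAdapter` only. The source and the store would not change.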

The interaction data model shows that interaction data can be represented as explicit entities. User intent originates in structured activity, not in prompts. Capturing that structure creates a stable foundation for AI integration.

Capturing Signals Without Polluting Business Logic

Signal capture must occur at deterministic interception points. Middleware hooks, router listeners, action dispatchers, and event emitters provide stable surfaces. They observe behavior without rewriting feature code.

A disciplined capture layer promotes only meaningful transitions into context. It doesn’t record every keystroke.
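A minimal sketch of such an interception point wraps an existing dispatch function. It assumes a generic `dispatch(action)` style and invented action type names, and is not tied to a specific framework.

```typescript
type AppAction = { type: string; payload?: unknown };
type Dispatch = (action: AppAction) => void;

// Action types treated as meaningful transitions (assumed names).
const MEANINGFUL_ACTIONS = ["filters/applied", "route/changed", "selection/changed"];

// Observes actions after the feature code has handled them.
function withSignalCapture(dispatch: Dispatch, onSignal: (action: AppAction) => void): Dispatch {
  return (action) => {
    dispatch(action); // feature logic runs unchanged
    if (MEANINGFUL_ACTIONS.indexOf(action.type) !== -1) onSignal(action);
  };
}

const featureLog: string[] = [];
const capturedSignals: string[] = [];

const capturingDispatch = withSignalCapture(
  (action) => {
    featureLog.push(action.type);
  },
  (action) => {
    capturedSignals.push(action.type);
  }
);

capturingDispatch({ type: "input/keystroke", payload: "a" });
capturingDispatch({ type: "selection/changed", payload: ["r-1"] });
```

Both actions reach the feature code, but only the selection change is promoted to a signal.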

For example:

- A filter adjustment updates appliedFilters.

- A route change updates activeWorkspaceId.

- A selection event updates selectedRecordIds.

- A draft edit toggles editingMode and increments a version counter.

This step turns scattered UI events into stable, well-defined context fields. Versioning also matters. Each meaningful transition increments a context version. That makes it easier to reproduce behavior, trace issues, and control when the model runs.
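The four examples above can be sketched as a reducer over a discriminated union. The signal names are hypothetical; the context field names come from the list.

```typescript
interface InteractionContext {
  version: number;
  appliedFilters: Record<string, string>;
  activeWorkspaceId: string | null;
  selectedRecordIds: string[];
  editingMode: boolean;
}

type UiSignal =
  | { type: "filterChanged"; key: string; value: string }
  | { type: "routeChanged"; workspaceId: string }
  | { type: "selectionChanged"; recordIds: string[] }
  | { type: "draftEdited" }
  | { type: "keystroke"; key: string };

const initialContext: InteractionContext = {
  version: 0,
  appliedFilters: {},
  activeWorkspaceId: null,
  selectedRecordIds: [],
  editingMode: false,
};

function reduceContext(ctx: InteractionContext, signal: UiSignal): InteractionContext {
  // Every meaningful transition gets the next version number.
  const bumped = { ...ctx, version: ctx.version + 1 };
  switch (signal.type) {
    case "filterChanged":
      return { ...bumped, appliedFilters: { ...ctx.appliedFilters, [signal.key]: signal.value } };
    case "routeChanged":
      return { ...bumped, activeWorkspaceId: signal.workspaceId };
    case "selectionChanged":
      return { ...bumped, selectedRecordIds: signal.recordIds };
    case "draftEdited":
      return { ...bumped, editingMode: !ctx.editingMode };
    default:
      return ctx; // keystrokes and other noise leave the context untouched
  }
}

const demoSignals: UiSignal[] = [
  { type: "filterChanged", key: "status", value: "overdue" },
  { type: "keystroke", key: "a" },
  { type: "routeChanged", workspaceId: "ws-7" },
  { type: "selectionChanged", recordIds: ["r-1", "r-2"] },
  { type: "draftEdited" },
];

const finalContext = demoSignals.reduce<InteractionContext>(reduceContext, initialContext);
```

Five signals arrive, four change the context, and the version ends at 4. Replaying the same signals from `initialContext` always produces the same result, which is what makes behavior reproducible.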

Structuring Context for Predictable Consumption

A structured context store should segment bounded domains. Billing state remains separate from editor state. Search filters remain independent from identity context.

Keeping domains bounded prevents context bloat and makes export scoping far simpler.

Two propagation strategies typically apply. Snapshot-based delivery sends a complete, sanitized context at invocation time. This approach simplifies debugging and reduces complexity. Incremental propagation streams only meaningful deltas. This approach supports long-lived assistants but requires careful buffering.
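To illustrate the difference, here is a small sketch of the incremental strategy: compute a delta between two flat context objects and apply it on the receiving side. `computeDelta`, `applyDelta`, and the flat value type are assumptions; a snapshot strategy would simply send `next` whole.

```typescript
type FlatValue = string | number | boolean | null;
type FlatContext = Record<string, FlatValue>;

interface ContextDelta {
  baseVersion: number; // version the delta must be applied on top of
  changed: FlatContext;
  removed: string[];
}

function computeDelta(baseVersion: number, prev: FlatContext, next: FlatContext): ContextDelta {
  const changed: FlatContext = {};
  Object.keys(next).forEach((key) => {
    const value = next[key];
    if (value !== undefined && prev[key] !== value) changed[key] = value;
  });
  const removed = Object.keys(prev).filter((key) => !(key in next));
  return { baseVersion, changed, removed };
}

function applyDelta(prev: FlatContext, delta: ContextDelta): FlatContext {
  const next: FlatContext = { ...prev, ...delta.changed };
  delta.removed.forEach((key) => {
    delete next[key];
  });
  return next;
}

const before: FlatContext = { activeInvoiceId: "inv-1", selectedCount: 2, draftOpen: true };
const after: FlatContext = { activeInvoiceId: "inv-1", selectedCount: 5 };

const delta = computeDelta(7, before, after);
const rebuilt = applyDelta(before, delta);
```

The delta carries one changed field and one removal instead of the whole context. The cost is that the receiver has to track `baseVersion` and buffer correctly, which is the extra care the incremental approach demands.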

Reliable execution depends on predictable state transitions. The model receives explicit fields such as activeInvoiceId or selectedCount. It does not need to interpret screen context from a paragraph of text.

Memory-safe buffering protects performance. Context pruning removes stale domains. Debounced updates prevent unnecessary invocation.
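Both ideas fit in a short sketch: a debouncer that forwards only the latest context after a quiet period, and a pruning helper that drops domains not updated recently. The `TimerApi` indirection, function names, and thresholds are assumptions made so the sketch can run against real or fake time.

```typescript
// Timer abstraction: start a delayed callback, get back a cancel function.
interface TimerApi {
  start(callback: () => void, delayMs: number): () => void;
}

const realTimer: TimerApi = {
  start(callback, delayMs) {
    const handle = setTimeout(callback, delayMs);
    return () => clearTimeout(handle);
  },
};

// Forwards only the most recent value once updates pause for waitMs.
function debounceInvocation<T>(
  invoke: (latest: T) => void,
  waitMs: number,
  timer: TimerApi = realTimer
): (latest: T) => void {
  let cancelPending: (() => void) | null = null;
  return (latest: T): void => {
    if (cancelPending) cancelPending(); // drop the superseded update
    cancelPending = timer.start(() => {
      cancelPending = null;
      invoke(latest);
    }, waitMs);
  };
}

interface DomainEntry {
  updatedAt: number; // epoch milliseconds
  data: Record<string, unknown>;
}

// Returns a copy containing only domains updated within maxAgeMs.
function pruneStaleDomains(
  domains: Record<string, DomainEntry>,
  now: number,
  maxAgeMs: number
): Record<string, DomainEntry> {
  const kept: Record<string, DomainEntry> = {};
  Object.keys(domains).forEach((domainName) => {
    const entry = domains[domainName];
    if (entry && now - entry.updatedAt <= maxAgeMs) kept[domainName] = entry;
  });
  return kept;
}
```

In an application you might wrap the model call as `debounceInvocation(sendToModel, 300)`, where `sendToModel` is your own function, and run `pruneStaleDomains` just before each export.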

The Adapter Boundary: Scoped and Sanitized Export

The point where runtime state leaves the application and reaches the model determines how safe and reliable the system remains.

Security analysis of LLM application attack surfaces highlights risks introduced when unscoped or unsanitized data leaves the runtime. Injection vulnerabilities, sensitive data exposure, and output manipulation can occur when boundaries lack clarity.

The Context Adapter enforces this boundary. It performs a scoped export of relevant domains. It removes sensitive or irrelevant fields. It validates schema consistency before invocation. It transforms structured context into provider-agnostic payloads.

Raw logs shouldn’t pass through. Secret tokens shouldn’t propagate. Implicit state shouldn’t leak across layers.
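As a minimal sketch of that boundary, the function below exports only the requested domains, drops fields whose names look sensitive, validates the version, and returns a plain payload with no provider-specific shape. The domain names, the deny-list pattern, and the payload layout are all assumptions for illustration. A real deny-list would need to match your own field naming.

```typescript
type DomainName = "billing" | "editor" | "search" | "identity";
type DomainFields = Record<string, unknown>;

interface RuntimeContext {
  version: number;
  domains: Partial<Record<DomainName, DomainFields>>;
}

interface ProviderAgnosticPayload {
  schemaVersion: string;
  contextVersion: number;
  context: Record<string, DomainFields>;
}

// Assumed deny-list: any field name containing one of these words is dropped.
const SENSITIVE_KEY = /token|secret|password/i;

function exportContext(ctx: RuntimeContext, scope: DomainName[]): ProviderAgnosticPayload {
  // Minimal validation: refuse to export a context with a malformed version.
  if (ctx.version < 0 || Math.floor(ctx.version) !== ctx.version) {
    throw new Error("context version must be a non-negative integer");
  }
  const context: Record<string, DomainFields> = {};
  scope.forEach((domainName) => {
    const fields = ctx.domains[domainName];
    if (!fields) return; // domain not populated, nothing to export
    const clean: DomainFields = {};
    Object.keys(fields).forEach((key) => {
      if (!SENSITIVE_KEY.test(key)) clean[key] = fields[key];
    });
    context[domainName] = clean;
  });
  return { schemaVersion: "1", contextVersion: ctx.version, context };
}

const runtimeContext: RuntimeContext = {
  version: 12,
  domains: {
    billing: { activeInvoiceId: "inv-42", selectedCount: 3 },
    identity: { userId: "u-17", sessionToken: "abc123" },
    editor: { editingMode: true },
  },
};

const exported = exportContext(runtimeContext, ["billing", "identity"]);
```

The editor domain stays behind because it was not in scope, and `sessionToken` never reaches the payload.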

Clear separation here improves reliability and keeps the integration aligned with established security practices.

The diagram illustrates deterministic flow from runtime state to controlled model exposure. It emphasizes separation between internal state management and external AI consumption.

Performance and Operational Realism

Embedding AI into production systems requires operational discipline. McKinsey’s analysis of the economic potential of generative AI underscores that value realization depends on integration quality rather than novelty.

Performance considerations include debouncing context updates, limiting snapshot size, batching model calls, expiring stale versions, and logging schema versions instead of full payload content.
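Three of those controls can be sketched together: a size cap on the serialized snapshot, a log entry that records versions and size but not content, and a staleness check. The 4000-character budget, the type names, and the `maxLag` parameter are arbitrary assumptions.

```typescript
interface OutboundContext {
  schemaVersion: string;
  contextVersion: number;
  context: Record<string, unknown>;
}

interface InvocationLogEntry {
  schemaVersion: string;
  contextVersion: number;
  payloadChars: number; // size only, never the payload itself
}

const MAX_SNAPSHOT_CHARS = 4000; // assumed budget

function prepareInvocation(outbound: OutboundContext): { body: string; logEntry: InvocationLogEntry } {
  const body = JSON.stringify(outbound.context);
  if (body.length > MAX_SNAPSHOT_CHARS) {
    throw new Error(`snapshot too large: ${body.length} > ${MAX_SNAPSHOT_CHARS} characters`);
  }
  return {
    body,
    logEntry: {
      schemaVersion: outbound.schemaVersion,
      contextVersion: outbound.contextVersion,
      payloadChars: body.length,
    },
  };
}

// A queued invocation is stale once the live context has moved too far ahead.
function isStale(contextVersion: number, latestVersion: number, maxLag: number): boolean {
  return latestVersion - contextVersion > maxLag;
}

const prepared = prepareInvocation({
  schemaVersion: "1",
  contextVersion: 12,
  context: { billing: { activeInvoiceId: "inv-42" } },
});
```

`prepared.logEntry` is safe to write to ordinary logs because it contains no field values, while `prepared.body` goes only to the model call.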

Large-scale frontend systems already manage complex state and logging pipelines. Experience building reusable logging frameworks with masking capabilities demonstrates that disciplined interception and validation improve traceability and protect sensitive information. The same discipline applies to AI context modeling.

Careful design prevents runaway invocation, performance degradation, and unclear state transitions.

From Bolt-On Feature to Native Runtime Participant

When AI consumes structured runtime state, reliability improves. Repetitive clarification decreases because the selection context becomes explicit. Incorrect assumptions decline because the navigation state remains visible. Predictability increases because invocation triggers derive from deterministic transitions.

You don’t need to rebuild your application from scratch. You need to introduce a structured Interaction Context Layer. Treat AI as another runtime subscriber. Capture signals intentionally. Structure context deterministically. Enforce scoped export at the boundary.

If the AI inside your application feels disconnected, resist the urge to tweak the system prompt again. Instead, step back and inspect your runtime model. Map your interaction signals. Identify bounded context domains. Introduce a structured adapter layer.

Stable AI behavior comes from runtime design, not from rewriting prompts.

References:

- Abb, L. and Rehse, J.-R. (2022). A reference data model for process-related user interaction logs. arXiv preprint arXiv:2207.12054. https://doi.org/10.48550/arXiv.2207.12054.

- Abb, L. and Rehse, J.-R. (2024). Process-related user interaction logs: State of the art, reference model, and object-centric implementation. Information Systems. https://doi.org/10.1016/j.is.2024.102386.

- McKinsey & Company. (2023). Navigating the generative AI disruption in software. McKinsey & Company. https://www.mckinsey.com/industries/technology-media-and-telecommunications/our-insights/navigating-the-generative-ai-disruption-in-software.

- McKinsey & Company. (2023). What’s the future of generative AI? An early view in 15 charts. McKinsey & Company. https://www.mckinsey.com/featured-insights/mckinsey-explainers/whats-the-future-of-generative-ai-an-early-view-in-15-charts.

- OWASP Foundation. (2023). OWASP Top 10 for large language model applications 2023 v1.1. https://owasp.org/www-project-top-10-for-large-language-model-applications/assets/PDF/OWASP-Top-10-for-LLMs-2023-v1_1.pdf.

- Ponce, V. and Abdulrazak, B. (2022). Context-aware end-user development review. Applied Sciences 12(1): 479. https://doi.org/10.3390/app12010479.

- Featured Photo by Omar: Lopez-Rincon on Unsplash